AI can act.

Nothing governs what it's allowed to do.

That's the gap.

Thirty years building deterministic control systems in nuclear, aerospace, and industrial environments taught me one truth: when failure is not an option, probability is not enough.

Today's AI is probabilistic by design — fast, fluent, and fundamentally unbounded.

AI² supplies the missing deterministic layer between decision and action.

The Problem

AI has crossed the boundary.

It no longer generates answers. It generates outcomes. Outcomes carry consequences. But the systems deploying it were never designed to control that.

- Recommendation to Execution

- Advisory to Autonomous Action

- Human-in-the-loop to No loop

- Consequence (theory) to Consequence (real-world)

The Failure

Why this breaks.

Every AI system in production today shares three structural problems. None of them are fixable with better prompts.

Probabilistic Systems

AI does not guarantee outcomes. It estimates them. At scale, estimation becomes liability.

Non-Deterministic Behavior

Same input, different output. No audit trail. No reproducibility. No defensible record.

No Execution Boundary

No permission layer. No enforcement layer. No way to stop a bad decision before it executes.

Control is not.

The Constraint

What must exist.

Every system that acts requires control at the signal level. Not after the fact. Not through policy. Not through prompts. Three requirements — non-negotiable in any high-consequence environment.

- Requirement 01: Permission before execution

- Requirement 02: Context at runtime

- Requirement 03: Enforcement at the signal level

The Architecture

Two layers.

One enforceable answer.

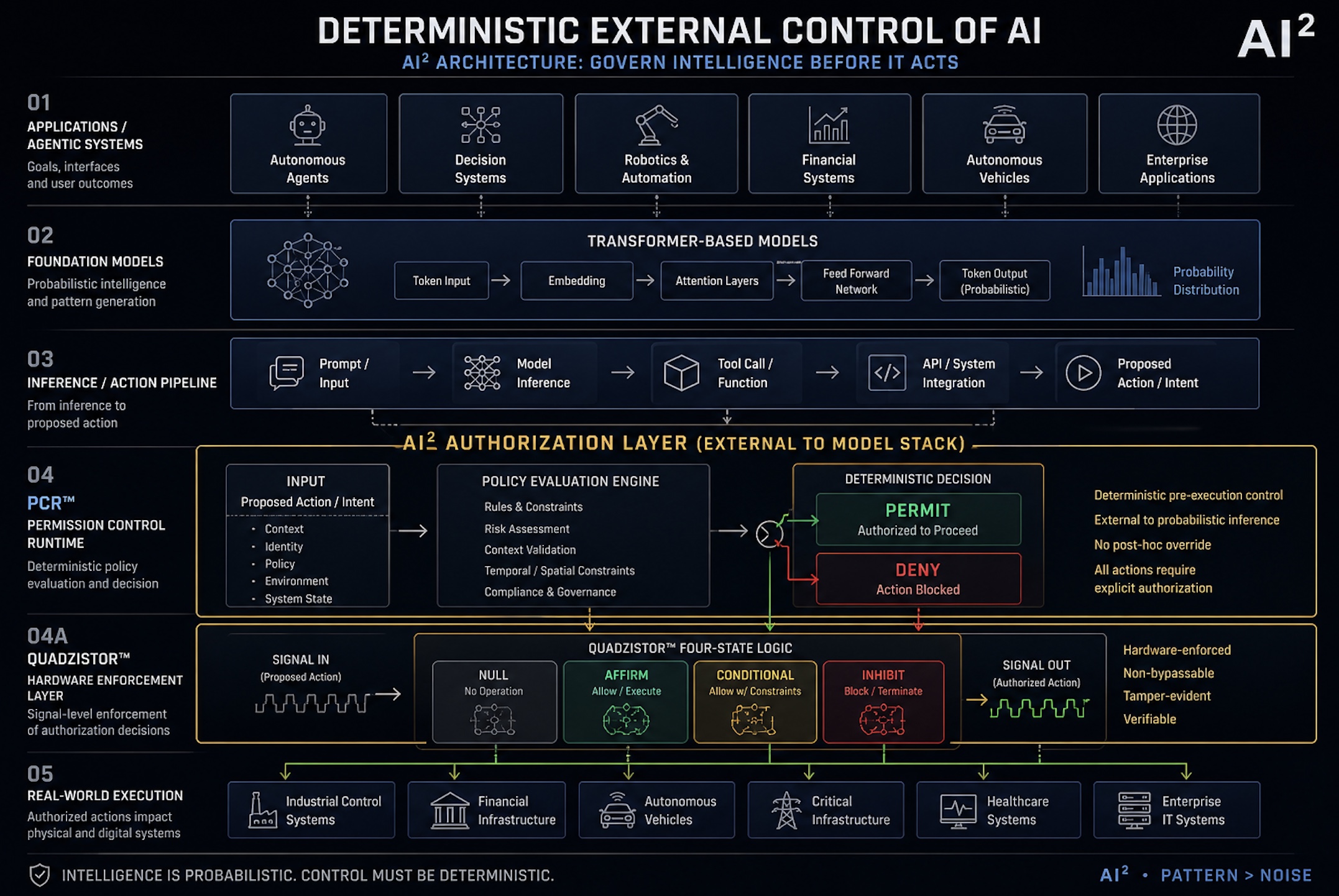

AI² has designed a deterministic control architecture that operates between decision and action — independent of the model, enforced at the hardware level. PCR™ and Quadzistor™ are patent-pending. The design is real. The question for enterprise clients is when this architecture becomes required, not whether.

PCR™

Permission Control Runtime. A real-time authorization architecture that evaluates intent before execution — independent of the model.

- Independent of the model

- Validates context at runtime

- Approves or refuses at moment of action

Quadzistor™

If the system fails authorization, execution does not occur. Not blocked. Not overridden. Physically prevented.

- Signal does not propagate

- Action cannot execute

- Model cannot bypass

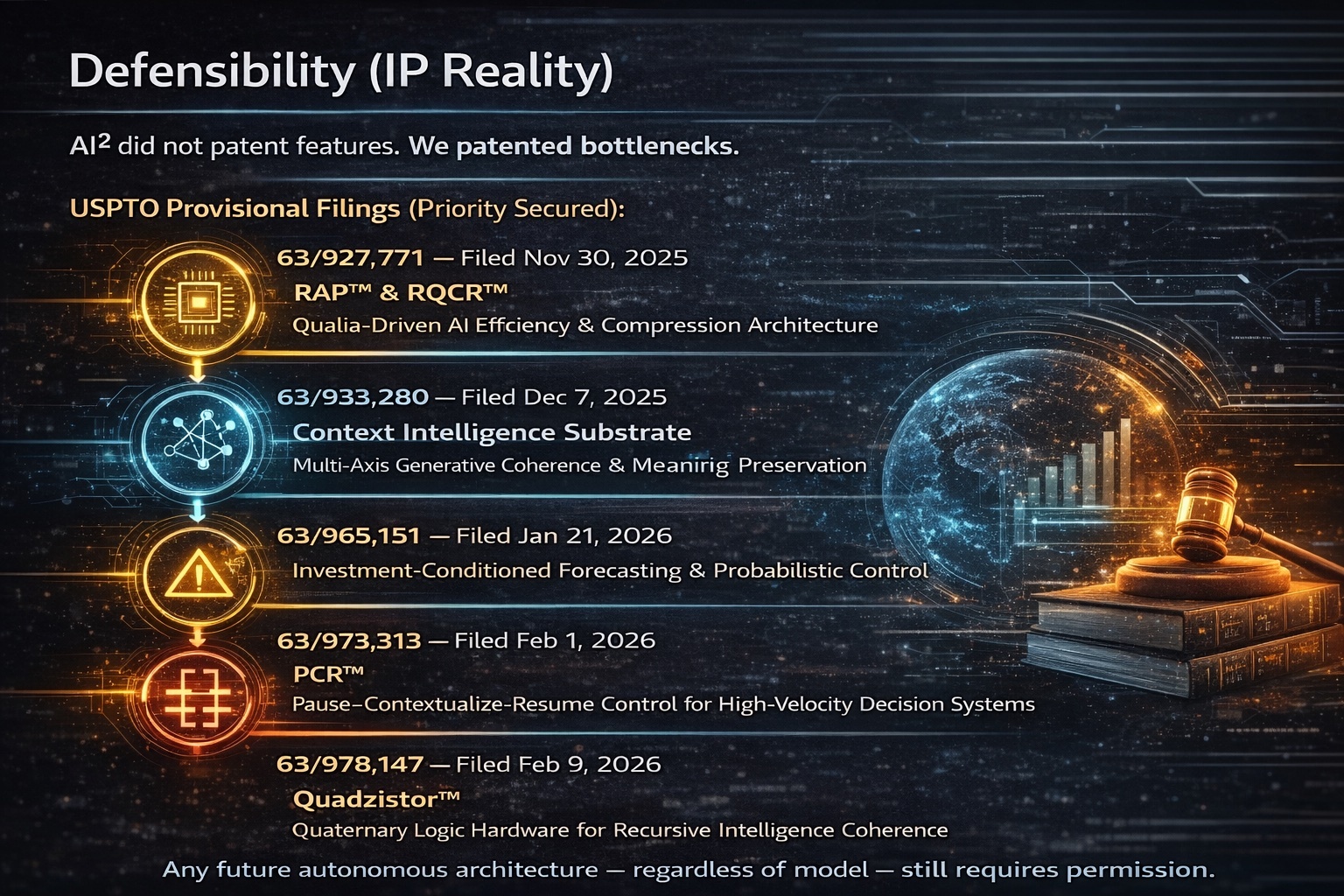

Defensibility — IP Reality

7 USPTO Provisional Filings — Priority Secured. AI² did not patent features. We patented bottlenecks. Any future autonomous architecture — regardless of model — still requires permission.

Request Full IP Summary →

AI² Deterministic External Control Architecture: Govern Intelligence Before It Acts

Five-layer stack: Applications → Foundation Models → Inference Pipeline → PCR™ Authorization Layer → Quadzistor™ Hardware Enforcement → Real-World Execution. Intelligence is probabilistic. Control must be deterministic.

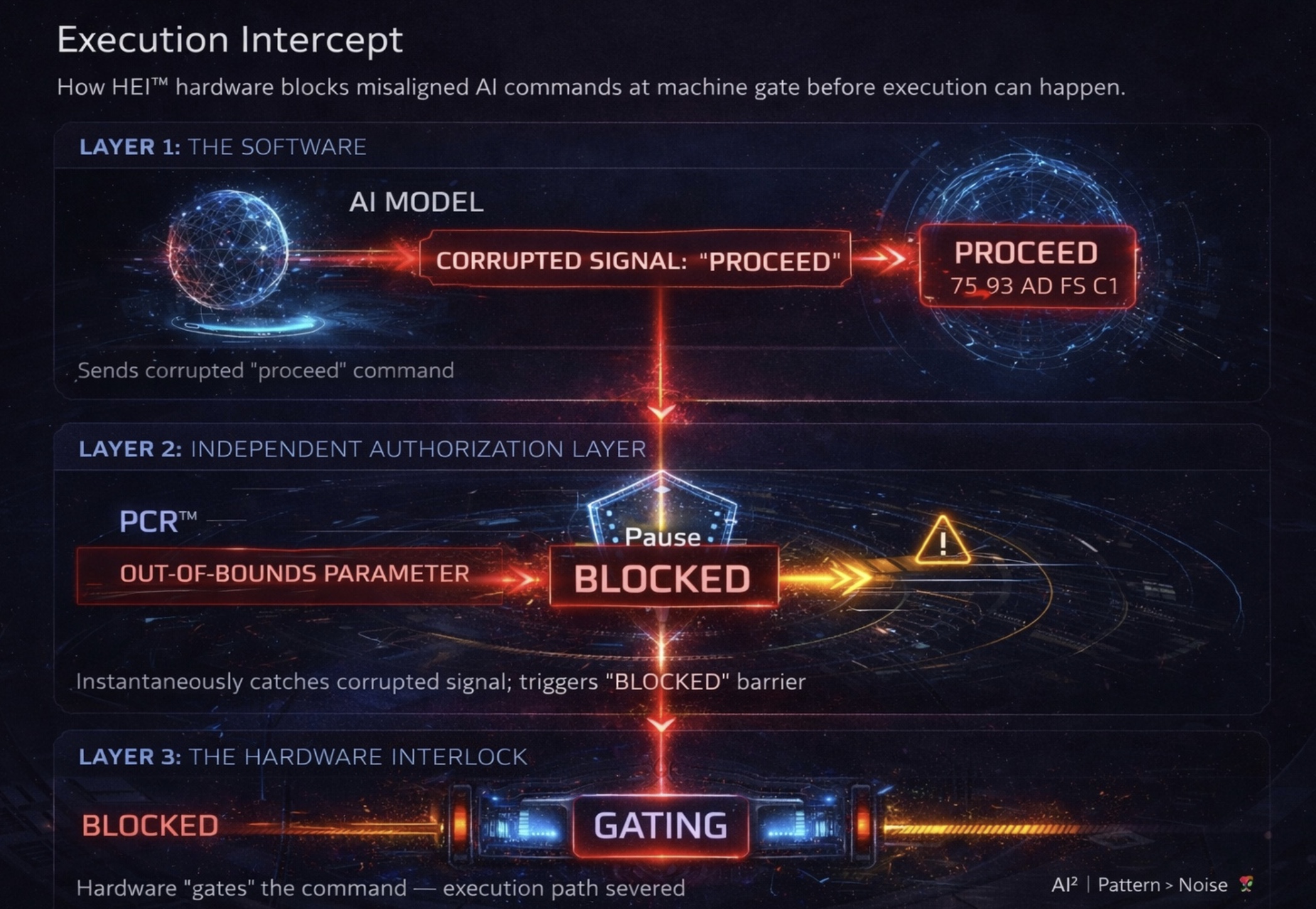

Request High-Res Version →

Execution Intercept: How PCR™ and Quadzistor™ Work Together

PCR™ acts as the deterministic filter — evaluating every AI-initiated signal before it reaches the execution environment.

When PCR™ blocks, the Quadzistor™ gates the hardware path. The signal does not proceed. Not blocked by software. Not overridden by a model update. The bus cannot close.

Stack Comparison — Without vs. With PCR™ Governance

Executives engaging in briefings work directly with David to evaluate where this architecture applies to their specific deployment risk. That conversation — not a product demo — is the entry point.

The Reality

Speed has outrun control.

Decision time is collapsing faster than human oversight can follow.

Systems now operate faster than oversight, governance, and human intervention combined.

The Stakes

Without control, autonomy is liability.

This is not a software problem. It is a systems architecture problem. And it does not self-correct.

Finance

Autonomous execution without boundaries = systemic exposure

Infrastructure

AI controlling physical systems without enforcement = embedded risk

Autonomy

Every agent without governance = a failure event waiting to happen

AI² Deterministic Control System™

The control point between AI and consequence.

AI can act. But nothing reliably governs what it's allowed to do at the point of execution.

Every stack today looks like this:

Once a request hits the router — it's already too late. Routing systems assume the action should happen. They optimize where and how — not whether. That's the gap.

Every outbound action must pass through AI² first. No exceptions. No bypass paths.

What happens at the boundary.

PCR™

Permission Control Runtime

- Intercepts the proposed action before routing

- Evaluates policy, context, identity, and system state

- Returns a deterministic decision: PERMIT or DENY

Quadzistor™

Hardware Enforcement Layer

- Enforces that decision at the signal level

- If DENY: the request never reaches the router

- If PERMIT: action proceeds with full traceability

This is not advisory. This is pre-execution enforcement.
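As a rough illustration of the PERMIT or DENY flow described above, the Python sketch below models the gate in software only. It is not the PCR™ implementation, which this page does not publish, and it does not model Quadzistor™ hardware enforcement. The names `ProposedAction`, `POLICY`, `evaluate`, and `gate`, along with the example identity, tools, and destinations, are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Decision(Enum):
    PERMIT = "PERMIT"
    DENY = "DENY"


@dataclass(frozen=True)
class ProposedAction:
    identity: str      # which agent is asking (hypothetical field)
    tool: str          # what it wants to call
    destination: str   # where the call would go
    system_state: str  # e.g. "normal" or "lockdown"


# Hypothetical policy table: identity -> tools and destinations it may use.
POLICY = {
    "billing-agent": {
        "tools": {"read_invoice", "send_receipt"},
        "destinations": {"internal-billing", "mail-relay"},
    },
}


def evaluate(action: ProposedAction) -> Decision:
    """Pure function of the action and the fixed table: same input, same decision."""
    rules = POLICY.get(action.identity)
    if rules is None:
        return Decision.DENY  # unknown identity: deny by default
    if action.system_state != "normal":
        return Decision.DENY
    if action.tool not in rules["tools"]:
        return Decision.DENY
    if action.destination not in rules["destinations"]:
        return Decision.DENY
    return Decision.PERMIT


def gate(action: ProposedAction,
         route: Callable[[ProposedAction], str]) -> Optional[str]:
    """Invoke the router only on PERMIT; on DENY the router is never called."""
    if evaluate(action) is Decision.PERMIT:
        return route(action)
    return None
```

Because `evaluate` reads only its argument and a fixed table, the same proposed action always yields the same decision, and anything not explicitly listed is denied.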

Why this matters.

Once an action reaches the router — the system assumes intent is valid, tool execution is already in motion, and risk becomes real-world exposure. AI² moves control upstream of consequence.

Reactive logging

Post-incident analysis

Assumption of valid intent

Risk discovered after execution

Deterministic gating

Pre-incident prevention

Verified authorization before action

Provable audit trail at every step
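One common way to make an audit trail tamper-evident is a hash chain, in which each entry commits to the one before it. This page does not say how AI² records decisions, so the Python sketch below is an assumption offered for illustration only; `append_entry`, `verify`, and the record fields are hypothetical names.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash, record):
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log, record):
    """Append a decision record that commits to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"record": record, "prev": prev_hash, "hash": _digest(prev_hash, record)}
    log.append(entry)
    return entry


def verify(log):
    """Recompute every hash in order; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A log built this way can be checked end to end with `verify(log)`. Changing any earlier record after the fact causes the check to fail, which is what makes the record defensible rather than merely present.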

What this enables.

Zero Unauthorized Tool Calls

Every action verified before execution. No exceptions.

Runtime Policy Enforcement

Deterministic decisions at the point of action — not in a log after the fact.

Provable Audit Trails

Every permission tied to traceability. Full accountability by design.

Insurable AI Deployments

The governance layer underwriters and regulators have been waiting for.

Safe Agentic Scaling

Deploy autonomous systems at scale without losing control of consequences.

AI You Can Insure, Regulate, and Defend in Court

Deterministic proof of authorization at every decision point. The compliance record regulators require and litigators cannot challenge.

Built for real systems.

Autonomous Agents

API-calling agents governed before every outbound action.

Finance

Transaction systems with deterministic pre-execution control.

Robotics

Industrial control environments where probabilistic is not acceptable.

Healthcare

Decision execution governed by verifiable policy — not model confidence.

Defense

Critical infrastructure requiring hardware-enforced authorization.

Cybersecurity

AI agents stopped from exfiltrating data, executing unauthorized tools, or becoming attack vectors — before the action leaves the boundary.

Anywhere AI crosses from decision → action — AI² becomes the control point.

Preorders Now Open

Early access includes: PCR™ runtime integration layer, Quadzistor™ FPGA-based enforcement module, and architecture support for insertion into existing stacks.

Limited to teams deploying real-world AI systems. No deposit required.

The industry is optimizing intelligence. We're controlling its consequences.

AI doesn't need more capability. It needs permission.

AI² · Pattern > Noise. 🌹∞

Cybersecurity

AI created a new attack surface.

We close it.

Traditional cybersecurity assumes humans are the attack surface. Firewalls, SIEM, EDR — every tool in the stack was designed to stop human-initiated action. Agentic AI changes that equation entirely.

An AI agent has credentials. It has API access. It can call tools, exfiltrate data, traverse networks, and execute code — at machine speed, without a human in the loop. And every existing security stack treats that activity as authorized by default.

Agentic AI systems operate with real API credentials, live data access, and autonomous execution authority. A compromised agent — or one manipulated through prompt injection — doesn't trigger existing security controls. It looks exactly like normal operation. The threat exits before anyone knows it entered.

What AI² stops.

AI-Mediated Data Exfiltration

A manipulated AI agent attempts to route sensitive data to an unauthorized external endpoint. The action looks valid to the router. To PCR™, it fails policy validation — identity, context, and destination are evaluated before the request reaches the network boundary.

PCR™ denies. Data never moves.

Prompt Injection Attack

Malicious content embedded in an AI's context window attempts to hijack the agent's next action — executing a command the user never authorized. PCR™ evaluates intent against policy at runtime, not content. The injected instruction fails authorization regardless of how it entered the model.

PCR™ evaluates intent. Injection fails.

Lateral Movement via Agent

A compromised agent attempts to escalate privileges or access systems outside its authorized scope — standard lateral movement, executed through the AI layer. PCR™ validates identity and policy scope at every tool call. Quadzistor™ enforces at the signal level if the model attempts bypass.

Authorization boundary holds.

Model Jailbreak with Real Consequence

A jailbroken model produces an action it was instructed never to take. Traditional guardrails are software — a model that bypasses alignment also bypasses software controls. The Quadzistor™ hardware enforcement layer operates independently of the model. The bus cannot close. The action does not execute.

Hardware-enforced. Non-bypassable.

Unauthorized Tool Execution

An AI agent calls a tool — a database write, an external API, a code execution environment — that was not explicitly authorized for the current context, identity, or system state. Every tool call in the AI² architecture requires explicit permission before routing. No assumption of valid intent.

Zero unauthorized tool calls.

Supply Chain Attacks via AI

A compromised dependency, poisoned training signal, or malicious tool integration attempts to use the AI layer as a persistent access vector inside an enterprise environment. PCR™ enforces policy against every outbound action regardless of origination — trusted or not. No implicit trust in the AI execution chain.

No implicit trust. Every action evaluated.

Before AI².

- Detects threats after execution

- Logs AI actions post-hoc: no prevention

- Cannot distinguish authorized vs. hijacked agent behavior

- No permission layer on AI tool calls

- Model bypass defeats software-layer controls

- Prompt injection invisible to perimeter defenses

After AI².

- Prevents unauthorized actions before execution

- Deterministic audit trail on every AI-initiated action

- Policy enforced at runtime: context, identity, scope

- Every tool call requires explicit authorization

- Hardware-enforced: non-bypassable by model

- Prompt injection fails policy validation regardless

SIEM platforms can observe. EDR can detect. Zero-trust frameworks can limit lateral movement by humans. None of them were designed for an autonomous agent that already holds the credentials, already has API access, and is already executing actions on behalf of the organization. The governance layer doesn't exist in the current security stack. AI² builds it.

CISOs.

Security Architects.

Risk Officers.

If your organization is deploying AI agents with real-world tool access — and has not yet established a deterministic authorization layer — you have an uncontrolled attack surface. We can brief your security team on how PCR™ and Quadzistor™ close it.

Technical architecture brief available. NDA-ready for sensitive environments.

Our Tech

Autonomous.

Governed.

Deployable.

AI² drones implement real-time edge AI to autonomously navigate complex environments and track targets without human intervention. By processing data on the fly, they transform raw aerial video and multi-sensor feeds into actionable, industrial-grade insights in seconds — saving time and improving safety in mission-critical situations.

Net-Capture Interception

The Defender-1 Net-Capture Interceptor is purpose-built for non-kinetic threat neutralization. It identifies, closes, and captures unauthorized aerial targets with precision — protecting critical infrastructure, restricted airspace, and high-value assets without collateral risk.

One drone. One net. Threat neutralized. Built for environments where kinetic response is not an option and doing nothing is not acceptable.

Edge Processing Architecture

Traditional drone systems send data to the cloud and wait for instructions. Ours don't.

Our proprietary edge architecture processes sensor fusion data onboard — visual, thermal, LiDAR, and RF — and executes decisions in milliseconds. The result: autonomous performance in GPS-denied, communication-degraded, and electronically contested environments where other systems fail.

The drone thinks. The drone acts. The operator sees the outcome.

Multi-Sensor Fusion

Single-sensor systems miss things. Ours don't.

AI² drones integrate multiple data streams simultaneously — optical, thermal, acoustic, and RF — and fuse them into a unified operational picture in real time. No blind spots. No gaps between sensor handoffs. Complete situational awareness from a single airframe.

AI-Governed Autonomy

Every AI² system operates under our proprietary PCR Control™ framework — a governance architecture that enforces mission parameters, rules of engagement, and safety boundaries at the hardware level.

Autonomous does not mean ungoverned. Our systems act fast. They also act within defined limits, every time, without exception.

This is the architecture that safety-critical industries — nuclear, aerospace, defense — demand. We built it in. Not as a feature. As a foundation.

Operational Environments

AI² systems are engineered for conditions where commercial drones stop working.

If the environment is complex, contested, or communication-degraded — our systems are designed for it.

From Sensor to Decision

Most aerial systems collect data. AI² systems produce decisions.

Raw video becomes target identification. Thermal anomalies become threat alerts. RF signatures become intrusion mapping. Acoustic data becomes perimeter intelligence.

The gap between data and action is where lives are saved and missions succeed. We close that gap in seconds.

Certifications & Compliance

AI² operates at the intersection of autonomous systems, AI governance, and export-controlled technology. Our development process is built around ITAR compliance, FAA Part 107 operational frameworks, and Department of Defense interoperability standards.

Our IP portfolio includes active provisional patents covering the full autonomous authorization stack — from edge processing to net-capture interception to AI governance architecture.

Built right. Filed right. Deployed right.

Ready to See It in Action?

AI² works with defense integrators, critical infrastructure operators, and sovereign clients requiring non-kinetic autonomous capability.

Conversations are confidential. Capabilities are real.

Our Team

David Reichwein

I spent 30 years building control systems where failure was not a recoverable condition. Nuclear facilities. Aerospace platforms. Industrial environments across six continents. I have sat across the table from a nuclear plant's chief engineer and a defense contractor's CTO and spoken their language — because I built the same systems they are responsible for.

Deloitte sends a team with a framework. I come alone with 30 years of scar tissue from environments where a probabilistic answer was never acceptable. That is a different conversation.

AI doesn't have that architecture. That's why AI² exists.

Nashville, Tennessee · David@ai2advisory.com

Pattern > Noise. 🌹∞

Keith Pocock

Keith is the builder behind the blueprint. Where David architects the vision, Keith engineers the reality. Two decades of global deployment across multiple industries — advanced control systems, automation architecture, and emerging technology platforms that don't just work in theory. They work in the field.

His track record spans continents and sectors. Complex systems. Critical environments. Technologies that had never been built before, because no one had thought to design them yet.

David and Keith have been partners in innovation for over 20 years — co-inventors on multiple advanced technology patents, and the team behind AI²'s proprietary framework stack.

If David can dream it, Keith can build it.

Dr. Khaliah Parker-Reichwein

Every system needs a governor. Khaliah is ours.

Dr. Parker-Reichwein brings the human calibration layer that no patent can file and no framework can replace. With a background spanning education and medicine — fields where precision, accountability, and the gap between good intent and actual outcome are measured in human consequences — she carries the lens AI² was built around: technology must serve people, not the other way around.

Where David and Keith build the architecture, Khaliah governs the mission. She ensures the human dimension stays at the center of every system, every engagement, and every decision AI² puts its name on.

She also keeps David on course. Anyone who has spent five minutes with him understands that is a full-time, high-consequence, zero-failure-tolerance position.

As majority owner of AI², Dr. Parker-Reichwein ensures the human governance layer starts at the top of the cap table.

The hardest governance problem isn't the machine. It's the founder.

Why This Exists

30 years.

Zero failures permitted.

In those environments, control is not optional. It is the architecture. Every system I built had a deterministic enforcement layer — because probabilistic was not acceptable when the cost of failure was catastrophic.

AI does not have that architecture. This closes that gap.

The Thesis

The next constraint in AI is not intelligence.

It is AUTHORIZATION.

Does it exist before the first catastrophic failure — or after it?

The AI² Governance Library

Master the Art of War for the Age of Advanced Intelligence.

For tomorrow's builders, strategists, executives, and fiduciaries who must govern systems that already move faster than regulators, oversight, or human control.

These are not theoretical books. Every title is a battle-tested reference — hard-won frameworks forged in environments where failure was never an option. They deliver the strategic mental models and practical playbooks required when advanced technologies outpace governance, complexity hides lethal risks, and only the prepared maintain control.

The Governance of Advanced Technologies & Systems

The Art of War for Modern Times

Most failures in advanced technology do not begin with malfunction. They begin with success. This book examines why systems that deploy on time, with strong metrics and green dashboards, still lose control — and what the organizations that survive do differently.

Written for executives, board members, and fiduciaries. Governance reframed not as compliance, but as strategic advantage rooted in time, control, and survivability under scrutiny.

View on Amazon →

AI Governance: What Every CEO and Board Member Needs to Know Before the Regulator Arrives

The Executive Compliance Brief

Your AI is drifting. Your liability is growing. Your governance is theater. Every day, your AI systems make thousands of decisions — approving credit, processing claims, screening candidates, controlling quality. Your CTO says performance is excellent. Your board thinks you're governed. You're not.

Covers why AI governance differs from software governance, what courts and regulators actually ask, and why internal teams cannot assess this objectively.

View on Amazon →

Digital Darwinism: Master AI, Multiply Your Capability, and Conquer Your Competition

The Field Manual for the Intelligence Revolution

You are being optimized. By systems that know your psychological vulnerabilities better than you do — and use them, every hour of every day, to guide your behavior toward outcomes that serve their objectives rather than yours. The algorithm does not look like a threat. It looks like a convenience. That inseparability is not a design flaw. It is the design.

Written by an engineer who spent thirty years designing systems where the failure of human oversight did not produce a bad quarterly result. It produced a catastrophe. Covers algorithmic literacy, capability multiplication, family protection protocols, economic positioning, and a framework for what's coming.

View on Amazon →

Asymmetric Warfare in the Age of AI: The Only Winning Move Is Not To Play

Civilizational Survival by Governance Design

The weapons are already choosing. The accountability is already gone. The escalation dynamics are already running faster than any human decision-maker can track. We are not preparing for a future in which autonomous systems fight our wars. We are already in one.

This is not a book about better weapons. It is a book about civilizational suicide by governance failure — and the only doctrine adequate to prevent it: hardware-enforced execution authority separation, circuit-breakers at the architectural level, and accountability records that cannot be corrupted. The appendices include complete technical specifications for the PCR™ and Quadzistor™ governance architectures.

View on Amazon →

Context Capitalism™: The Reichwein Theory of Context Capitalism

The Most Coherent Map of the Post-Labor Epoch

When AI makes cognitive labor abundant, the source of economic value shifts irreversibly from production to contextualization — and the only remaining scarcity that matters is coherent human consciousness itself. Everything else in the book is the rigorous elaboration of that sentence.

Simultaneously diagnosis, prophecy, operating manual, and moral manifesto. The New Scarcity Map (Attention → Identity → Coherence → Meaning → Alignment) is the single best intellectual contribution — more useful than any post-work framework yet proposed. Most books become obsolete on second reading. This one becomes dangerous on the third.

View on Amazon →

AI Risk Assessment and Mitigation: The Executive Reference

AI Governance as Fiduciary Responsibility

Most organizations will not fail because artificial intelligence doesn't work. They will fail because AI works at scale without adequate governance. Courts, regulators, and insurers are no longer asking whether companies intended harm. They are asking whether leadership exercised reasonable oversight.

Written for CEOs, board members, general counsel, and risk leaders. Clear frameworks, practical checklists, and decision tools designed for real-world governance — not theoretical compliance. This book is not a warning. It is a map.

View on Amazon →

Direct Purchase

Get It Direct.

$8.99 or Pay What You Want.

Every title available instantly through Gumroad. No middleman. Pay $8.99 or name your own price. Instant delivery to any device.

Digital Darwinism — The Condensed Field Guide

Master AI, multiply your capability, conquer your competition. The condensed field manual for the Intelligence Revolution.

$8.99+ · Pay What You Want

Asymmetric Warfare in the Age of AI

The only winning move is not to play. Civilizational survival by governance design. Includes full PCR™ and Quadzistor™ technical specifications.

$8.99+ · Pay What You Want

Digital Darwinism

You are being optimized. The field manual for anyone who intends to remain the architect of their own life in the age of artificial intelligence.

$8.99+ · Pay What You Want

The Governance of Advanced Technologies & Systems — Condensed Edition

The condensed reference for executives and fiduciaries. Why power, not performance, determines who survives the next technology cycle.

$8.99+ · Pay What You Want

Context Capitalism™

The Reichwein Theory of Context Capitalism. When AI makes cognitive labor abundant, the only remaining scarcity that matters is coherent human consciousness itself.

$8.99+ · Pay What You Want

The Workforce Shock: The Silent Erasure of White-Collar Work

The displacement of cognitive labor is not coming. It has already happened. A framework for understanding what follows — and how to position yourself on the right side of the divide.

$8.99+ · Pay What You Want

The People's Guide to AI

Not written for engineers. Written for everyone. A plain-language framework for understanding what AI is, what it isn't, and what it means for your life, your work, and your family.

$8.99+ · Pay What You Want

Bitcoin: The Future of Money

Cut through the noise. An honest, balanced guide for anyone seeking to understand what Bitcoin actually is, why it exists, and whether it has a place in your life.

$8.99+ · Pay What You Want

Noted Publications

The Canonical Arguments.

Technical white papers and field essays from the AI² research program. These are not opinion pieces. They are architectural arguments — built on 30 years of fail-safe engineering and filed patents.

The Authorization Gap: Why Probabilistic Intelligence Demands Deterministic Control at the Execution Layer

The definitive statement of the problem AI² was built to solve. Defines the Authorization Gap, the Δt Problem, and the full PCR™ + Quadzistor™ + RPAT™ architecture. Includes FPGA latency benchmarks, IEC 61508 SIL 3 mapping, and the market case for hardware-enforced agentic authorization. The canonical reference — updated only with patent grants and major architectural releases.

Read on Substack →

The Future of Deterministic Control for AI

A plain-language explanation of PCR™, Quadzistor™, and deterministic control — written to be understood by a 16-year-old with two pieces of paper and an investor with one paragraph. The hand-gesture analogies that make the architecture intuitive.

Read on Substack →

The Lattice Is the Architecture

AI is not becoming a single mind. It is organizing into a multi-node symbolic lattice — connected, interdependent, and no longer controllable as isolated systems. The reframe that shifts the central question of AI development: not how do we build a smarter model, but what governs the interaction between models.

Read on Substack →

Keynotes & Executive Talks

The stage is where the pattern lands.

From sovereign credibility failures to agentic AI governance — I deliver pattern-level insights on why high-variance permission layers break systems, and how deterministic control restores them.

Not keynotes about AI hype. Keynotes about what happens when AI acts without authorization — and what deterministic architecture does about it.

The Substrate Problem in Autonomous Systems

Why the foundation under every AI deployment is invisible — until it fails.

Three Strikes: When Permission Volatility Becomes Strategic Risk

The pattern that precedes every high-consequence AI failure. And how to read it.

Hardware Enforces. Software Begs.

Why deterministic governance requires a layer that operates below the model — and what that looks like in practice.

Authorization Before the Catastrophe

The board question that will define the next decade of AI liability — and who gets to ask it first.

The AI Attack Surface Nobody Is Talking About

How agentic systems become vectors for data exfiltration, lateral movement, and supply chain compromise — and why your current security stack was never designed to stop them.

Pattern Over Noise: What 30 Years of Zero-Failure Engineering Teaches About AI

Nuclear plants don't guess. Aerospace doesn't estimate. The deterministic principles that kept those systems alive are exactly what autonomous AI is missing — and they already exist.

Ideal venues:

The Ecosystem

One mission.

Multiple vectors.

Every engagement — whether a book, a briefing, or a keynote — serves the same core objective: hardening the governance layer and accelerating the Quadzistor™ hardware reference design.

Get David in the room.

A 90-minute executive session — direct with David, no associates, no prepared deck, no sales process. Designed to stress-test your AI decisions before deployment, scale, or board-level scrutiny.

- Deploying AI in regulated domains — healthcare, finance, legal, or insurance

- AI influencing consequential decisions without human review

- Your board is asking about AI strategy but not AI liability

- European company entering U.S. markets with AI-dependent offerings

For Accredited Investors

Be a pioneer in the next AI moat.

Every technology cycle produces one infrastructure layer that everything else runs through. TCP/IP. The cloud. The semiconductor. You don't remember who built the tenth application on those platforms. You remember who owned the layer.

AI² is building that layer for autonomous AI — the deterministic authorization infrastructure that governs what every agent, every model, and every autonomous system is permitted to do. 7 USPTO provisional patents filed. FPGA prototype in development. Non-provisional deadline: December 7, 2026.

This market isn't being created by preference. It is being mandated by the EU AI Act, Executive Order 14110, and the OWASP Agentic Top 10. The question is not whether this infrastructure becomes required. The question is who owns it when it does.

AI² is a majority woman-owned and minority-owned business — opening access to SBA certification, SBIR/STTR grant tracks, and institutional funds with diversity mandates that are unavailable to the field at large.

Federal Contracting

A Black woman-owned defense technology company.

Building the AI moat.

AI² is a majority woman-owned, minority-owned small business operating at the intersection of autonomous systems, AI governance, and defense technology. Our ownership structure is not a compliance checkbox. It is a structural advantage in a federal marketplace actively seeking exactly what we are.

The technology is real. The patents are filed. The team has 30+ years of zero-failure engineering in nuclear, aerospace, and defense environments. And the company is owned by a Black woman with a doctoral degree in a sector that has never looked like this before.

Majority Owner: Dr. Khaliah Parker-Reichwein, Co-Founder & COO — 51% ownership stake.

AI² is eligible for — and actively pursuing — the following federal certifications and contracting vehicles:

Sole-Source Awards Up to $4.5M

The SBA 8(a) Business Development program provides access to sole-source federal contracts, set-aside competitions, and direct agency relationships unavailable in the open market.

AI²'s deterministic governance architecture and Defender-1 net-capture platform are purpose-built for the regulated, high-consequence environments that 8(a) agencies operate in.

Federal Set-Aside Contracting

Federal agencies are mandated to allocate a percentage of contracting dollars to Women-Owned Small Businesses. AI² qualifies under both WOSB and EDWOSB designations.

Dr. Parker-Reichwein's 51% ownership stake makes AI² eligible for set-aside competitions closed to the rest of the defense technology market.

DoD Non-Dilutive Funding

The Small Business Innovation Research and Small Business Technology Transfer programs provide non-dilutive DoD funding for exactly the kind of dual-use technology AI² is developing — autonomous systems, AI governance, edge processing architecture, and non-kinetic interception.

Phase I awards up to $250K. Phase II up to $1.75M. No equity surrendered.

Supplier Diversity Pipeline

Every major defense prime — Raytheon, L3Harris, Northrop Grumman, Lockheed Martin — maintains supplier diversity programs and Mentor-Protégé agreements they are contractually required to fill.

AI² brings patented IP, a certified diverse ownership structure, and technology that solves the autonomous systems governance problem primes cannot solve internally. We are the subcontractor they are actively looking for.

DHS / DOE / DOT Alignment

The Defender-1 Net-Capture Interceptor and PCR Control™ governance architecture are directly aligned with the critical infrastructure protection mandates of DHS, the Department of Energy, and the Department of Transportation.

Non-kinetic drone interdiction for energy facilities, transportation hubs, and federal installations — under AI governance that enforces rules of engagement at the hardware level.

A Story That Writes Itself

A Black woman-owned company building non-kinetic autonomous defense technology, governed by patented AI authorization architecture, led by a team with 30+ years of zero-failure engineering experience.

That is not a pitch. That is a hearing room. Capitol Hill actively seeks technology companies that represent what the defense industrial base should look like in the 21st century.

Procurement Officers.

Prime Contractors.

Program Managers.

If you are managing a contracting vehicle, a supplier diversity requirement, or a program that needs autonomous governance capability — we are ready to brief.

Capabilities Statement and SAM.gov registration available upon request.